Showing posts with label Russ McRee. Show all posts


Sunday, December 21, 2014

2014 Toolsmith Tool of the Year

If your browser doesn't support IFRAMEs, you can vote directly here.

Friday, March 01, 2013

toolsmith: Redline, APT1, and you – we’re all owned

Prerequisites/dependencies

Windows OS and .NET 4

Introduction

Embrace this simple fact: we’re all owned. Maybe you aren’t right now, but you probably were at some point or will be in the future. “Assume compromise” is a stance I’ve long embraced; if you haven’t climbed aboard this one-way train to reality, I suggest you buy a ticket. If headlines over the last few years weren’t convincing enough, Mandiant’s APT1: Exposing One of China’s Cyber Espionage Units report should serve as your re-education. As richly detailed, comprehensive, and well-written as it is, this report is groundbreaking in the depth of insight it offers into our enemy, but not necessarily as a general concept. Our adversary has been amongst us for many, many years, and the problem will get much worse before it gets better. They are all up in your grill, people; your ability to defend yourself and your organizations, and to hunt freely and aggressively, is the new world order. I am reminded, courtesy of my friend TJ O’Connor, of a most relevant Patton quote: "a violently executed plan today is better than a perfect plan expected next week." Be ready to execute. Toolsmith has spent six and a half years hoping to enable you, dear reader, to execute; take the mission to heart now more than ever.

I’ve covered Mandiant tools before for good reason: RedCurtain in 2007, Memoryze in 2009, and Highlighter in 2011. I stand accused of being a fanboy and hereby render myself guilty. If you’ve read the APT1 report, you should have taken immediate note of the use of Redline and Indicators of Compromise (IOCs) in Appendix G.

Outreach to Richard Bejtlich, Mandiant’s CSO, quickly established goals and direction: “Mandiant hopes that our free Redline tool will help incident responders find intruders on their network. Combining indicators from the APT1 report with Redline’s capabilities gives responders the ability to look for interesting activity on endpoints, all for free.” Well in keeping with the toolsmith’s love of free and open source tools, this conversation led to an immediate connection with Ted Wilson, Redline’s developer, who kindly offered his perspective:

“Working side by side with the folks here at Mandiant who are out there on the front lines every day is definitely what has driven Redline’s success to date. Having direct access to those with firsthand experience investigating current attack methodologies allows us to stay ahead of a very fast moving and quickly evolving threat landscape. We are in an exciting time for computer security, and I look forward to seeing Redline help new users dive headfirst into computer security awareness.

Redline has a number of impressive features planned for the near future, focusing first on expanding the breadth of live response data Redline can analyze. Some highlights from the next Redline release (v1.8) include full file system and registry analysis capabilities, as well as additional filtering and analysis tools around the always popular Timeline feature. Further out, we hope to leverage that additional data to provide expanded capabilities that help both the novice and the expert investigators alike.”

Mandiant’s Lucas Zaichkowsky, who will have presented on Redline at RSA by the time you read this, sums up Redline’s use cases succinctly:

1. Memory analysis from a live system or memory image file. Great for malware analysis.

2. Collect and review a plethora of forensic data from hosts in order to investigate an incident. This is commonly referred to as a Live IR collector.

3. Create an IOC search collector to run against hosts to see if any IOCs match.

He went on to say that while the second scenario is the most common use case, in light of current events (APT1), the third use case has a huge spotlight on it right now. That is where we’ll focus this discussion, using the APT1 IOC files to produce a collector and analyze an APT1 victim.

Installation and Preparation

Mandiant provides quite a bit of material regarding the preparation and use of Redline, including an extensive user guide and two webinars well worth taking the time to watch. Specific to this conversation, however, with attention to APT1 IOCs, we must prepare Redline for a targeted Analysis Session. The concept here is simple: install Redline on an analysis workstation and prepare a collector for deployment to suspect systems.

To begin, download the entire Digital Appendix & Indicators archive associated with the APT1 report.

Wesley McGrew (McGrew Security) put together a great blog post regarding matching APT1 malware names to publicly available malware samples from VirusShare (which is now the malware sample repository). I’ll analyze a compromised host with one of these samples, but first let’s set up Redline.

I organize my Redline file hierarchy under \tools\redline with individual directories for audits, collectors, IOCs, and sessions.

I copied Appendix G (Digital) – IOCs from the above-mentioned download to an APT1 directory under \tools\redline\IOCs.
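If you would rather script that layout, here is a minimal Python sketch. The D:\tools\redline root and the APT1 subfolder come from this walkthrough; the function name is my own, and it only creates empty folders, so you still copy the Appendix G .ioc files in yourself.

```python
from pathlib import Path

# Sub-directories used in this walkthrough; IOCs/APT1 holds the Appendix G .ioc files.
SUBDIRS = ("audits", "collectors", "IOCs/APT1", "sessions")


def make_redline_tree(root: str) -> list[Path]:
    """Create the Redline working hierarchy under root; safe to run repeatedly."""
    created = []
    for sub in SUBDIRS:
        path = Path(root) / sub
        path.mkdir(parents=True, exist_ok=True)  # no error if it already exists
        created.append(path)
    return created
```

On the analysis workstation you would call make_redline_tree(r"D:\tools\redline") once before copying in the IOCs.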

Open Redline and select Create a Comprehensive Collector under Collect Data. Select Edit Your Script and enable Strings under Process Listing and Driver Enumeration, and be sure to check Acquire Memory Image as seen in Figure 1.

Figure 1: Redline script configuration

I saved the collector as APT1comprehensive. These steps will add a lot of time to the collection process but will pay dividends during analysis. You have the option to build an IOC Search Collector, but by default it leaves out most of the acquisition parameters selected under Comprehensive Collector. You can (and should) also add IOC-inclusive analysis after acquisition, during the Analyze Data phase.

Redline, IOCs, and a live sample

I grabbed the binary 034374db2d35cf9da6558f54cec8a455 from VirusShare, described in Wesley’s post as a match for BISCUIT malware. BISCUIT is defined in Appendix C – The Malware Arsenal from Digital Appendix & Indicators as a backdoor with all the expected functionality, including gathering system information, downloading and uploading files, creating or killing processes, spawning a shell, and enumerating users.
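Before detonating anything, it is worth confirming the download really is the sample you think it is. A minimal Python sketch, assuming the MD5 listed above is the value you want to match; point it at wherever you saved the VirusShare download (that path is yours to supply).

```python
import hashlib

# MD5 of the BISCUIT sample as cited above.
EXPECTED_MD5 = "034374db2d35cf9da6558f54cec8a455"


def md5_of_file(path: str, chunk_size: int = 65536) -> str:
    """Hash the file in chunks so large samples need not fit in memory."""
    digest = hashlib.md5()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def is_expected_sample(path: str) -> bool:
    """True only if the file's MD5 matches the sample we meant to grab."""
    return md5_of_file(path) == EXPECTED_MD5
```

MD5 is fine for matching a known sample ID like this; it is not a defense against someone deliberately crafting a collision.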

I renamed the binary gc.exe, dropped it in C:\WINDOWS\system32, and executed it on a virtualized lab victim. I rebooted the VM for good measure to ensure that our little friend from the Far East achieved persistence, then copied the collector created above to the VM and ran RunRedlineAudit.bat.

If you’re following along at home, this is a good time to eat a meal, walk the dog, and watch The Walking Dead episode you DVR’d (it’ll be a while if you enabled strings as advised). Now sated, exercised, and with your zombie fix pulsing through your bloodstream, return to your victim system and copy the contents of the audits folder from the collector’s file hierarchy back to your Redline analysis station. Then select From a Collector under Analyze Data and choose the copied audit as seen in Figure 2.

Figure 2: Analyze collector results with Redline

Specify where you’d like to save your Analysis Session (D:\tools\redline\sessions if you’re following my logic). Let Redline crunch a bit and you will be rewarded with instant IOC goodness. Right out of the gate, the report details indicated that “2 out of my 47 Indicators of Compromise have hit against this session.”

Sweet, we see a file hash hit and a BISCUIT family hit as seen in Figure 3.

Figure 3: IOC hits against the Session

Your results will also be written out to HTML automatically; see Report Location at the bottom of the Redline UI. Note that the BISCUIT family hit is identified via UID a1f02cbe. Search for a1f02cbe under your IOCs repository and you should see a result such as D:\tools\redline\IOCs\APT1\a1f02cbe-7d37-4ff8-bad7-c5f9f7ea63a3.ioc.
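That repository search is easy to script. This sketch assumes, as in the path above, that the IOC files are named with their full UID and carry a .ioc extension; it simply matches the leading UID fragment Redline displays.

```python
from pathlib import Path


def find_ioc_by_uid(ioc_root: str, uid_prefix: str) -> list[Path]:
    """Return .ioc files anywhere under ioc_root whose name starts with uid_prefix."""
    prefix = uid_prefix.lower()
    return sorted(
        p for p in Path(ioc_root).rglob("*.ioc")
        if p.name.lower().startswith(prefix)
    )
```

With my layout, find_ioc_by_uid(r"D:\tools\redline\IOCs", "a1f02cbe") should return the single file shown above.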

Open the .ioc in your preferred editor and you’ll get a feel for what generates the hits. The most direct markup match is:

In the Redline UI, remember to click the little blue button with the embedded i (information) associated with each IOC hit. It highlights the specific IndicatorItem that triggered the hit and displays full metadata specific to the file, process, or other indicator.
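To get the same feel for an IOC outside the editor, you can walk its IndicatorItems programmatically. A minimal sketch, assuming the files follow the OpenIOC 1.0 schema (namespace http://schemas.mandiant.com/2010/ioc), where each IndicatorItem carries a condition attribute, a Context element whose search attribute names the data being tested (for example FileItem/Md5sum), and a Content element holding the value. If your files use a different schema version, adjust the namespace.

```python
import xml.etree.ElementTree as ET

# OpenIOC 1.0 namespace (assumption: change it if your .ioc files differ).
IOC_NS = "http://schemas.mandiant.com/2010/ioc"
NS = {"ioc": IOC_NS}


def list_indicator_items(ioc_path):
    """Return condition, search path, and value for every IndicatorItem in a .ioc file."""
    root = ET.parse(ioc_path).getroot()
    items = []
    for item in root.iter(f"{{{IOC_NS}}}IndicatorItem"):
        context = item.find("ioc:Context", NS)
        content = item.find("ioc:Content", NS)
        items.append({
            "condition": item.get("condition"),
            "search": context.get("search") if context is not None else None,
            "content": (content.text or "").strip() if content is not None else None,
        })
    return items
```

Running it against the a1f02cbe file and printing each dictionary gives a compact view of which terms could have produced the BISCUIT hit.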

But wait, there’s more. Even without defined, parameterized IOC definitions, you can still find other solid indicators on your own. I drilled into the Processes tab, selected gc.exe, expanded the selection, and clicked Strings. Having studied Appendix D – FQDNs and checked out the PacketStash APT1.rules file for Suricata and Snort (thanks, Snorby Labs), I went hunting (CTRL-F in the Redline UI) for string matches to the known FQDNs. I found 11 matches for purpledaily.com and 28 for newsonet.net as seen in Figure 4.

Figure 4: Strings yields matches too
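If you export those strings to a text file, the same hunt is easy to repeat across hosts. A minimal sketch, assuming a plain list of strings as input (how you export them from Redline is up to you) and seeded only with the two FQDNs named above. It counts strings that contain each domain, which approximates, but may not exactly equal, the CTRL-F tally.

```python
from collections import Counter

# Seeded with the two domains found above; extend with the rest of Appendix D.
APT1_FQDNS = ("purpledaily.com", "newsonet.net")


def count_fqdn_hits(strings, fqdns=APT1_FQDNS):
    """Count how many strings contain each FQDN, case-insensitively."""
    hits = Counter({fqdn: 0 for fqdn in fqdns})
    for s in strings:
        lowered = s.lower()
        for fqdn in fqdns:
            if fqdn in lowered:
                hits[fqdn] += 1
    return hits
```

For a strings dump saved one per line, count_fqdn_hits(open("gc_strings.txt", encoding="utf-8", errors="replace")) works; the filename is hypothetical.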

Great! If I have the following rule enabled on my sensors, I should see all the other systems that may be pwned with this sample:

alert udp $HOME_NET any -> $EXTERNAL_NET 53 (msg:"[SC] Known APT1 domain (purpledaily.com)"; content:"|0b|purpledaily|03|com|00|"…snipped
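The odd-looking content match is the domain in DNS wire format: each label is preceded by its length as a hex byte, so |0b| is 11 (the length of purpledaily), |03| is the length of com, and |00| is the empty root label that ends the name. This Python sketch builds that string for any FQDN, which makes it easy to write similar rules for the rest of Appendix D:

```python
def dns_wire_content(domain: str) -> str:
    """Render a domain as length-prefixed DNS labels in Snort content syntax."""
    parts = []
    for label in domain.rstrip(".").split("."):
        # DNS labels must be 1-63 bytes long.
        if not 0 < len(label) <= 63:
            raise ValueError(f"invalid DNS label: {label!r}")
        parts.append(f"|{len(label):02x}|{label}")
    # The zero-length root label terminates the name.
    return "".join(parts) + "|00|"
```

dns_wire_content("purpledaily.com") returns |0b|purpledaily|03|com|00|, and dns_wire_content("newsonet.net") returns |08|newsonet|03|net|00|. Because DNS names can arrive in mixed case, adding Snort’s nocase modifier to the content match is a reasonable hedge.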

Be advised that the latest version of Redline (1.7 as this was written) includes powerful, time-related filtering options including Field Filters, TimeWrinkle, and TimeCrunch. Explore them as you seek out APT1 attributes. There are lots of options for analysis; read the Redline Users Guide before beginning so as to be fully informed. :)

In Conclusion

I’m feeling overly dramatic right now. For ten years I’ve been waiting for what many of us have known or suspected all along to be blown wide open. APT1, presidential decrees, and “it’s not us,” oh my. Mandiant has offered both the fodder and the ammunition you need to explore and inform, so awake! I’ll close with a bit of the Bard (Ariel, from The Tempest):

While you here do snoring lie,
Open-ey'd Conspiracy
His time doth take.
If of life you keep a care,
Shake off slumber, and beware.
Awake, awake!

I am calling you to action and begging your wariness; your paranoia is warranted. If in doubt of the integrity of a system, hunt! There may be entire network ranges that you realize you don’t need to allow access to or from your network. Solution? Ye olde deny statement (thanks for reminding me, TJ). Time for action; use exemplary tools such as Redline to your advantage, where advantages are few.

Ping me via email at russ at holisticinfosec dot org if you have questions or topic suggestions, or hit me up on Twitter @holisticinfosec.

Cheers…until next month.

Acknowledgements

To the good folks at Mandiant:

Ted Wilson, Redline developer

Richard Bejtlich, CSO

Kevin Kin and Lucas Zaichkowsky, Sales Engineers

Thursday, March 01, 2012

toolsmith: Pen Testing with Pwn Plug

Prerequisites

4GB SD card (needed for installation)

Is just the way that we are tied in

But there's no one home

I grieve for you –Peter Gabriel

Introduction

As you likely know by now, given toolsmith’s position at the back of the ISSA Journal, March’s theme is Advanced Threat Concepts and Cyberwarfare. Well, dear reader, for your pwntastic reading pleasure I have just the topic for you. The Pwn Plug can be considered an advanced threat and is useful in tactics that certainly resemble cyberwarfare methodology. Of course, those of us in the penetration testing discipline would only ever use such a device to the benefit of our legally engaged targets.

A half year ago I read about the Pwn Plug when it was offered in partnership with SANS to students taking vLive versions of SEC560: Network Penetration Testing and Ethical Hacking or SEC660: Advanced Penetration Testing, Exploits, and Ethical Hacking. It seemed very intriguing, but I’d already taken the 560 track and was immersed in other course work. Then, a couple of months ago, I read that Pwnie Express had released the Pwn Plug Community Edition and was even more intrigued, but I had a few things I planned to purchase for the lab before adding a Sheevaplug to the collection.

But alas, the small world clause kicked in: Dave Porcello (grep) and Mark Hughes from Pwnie Express, along with Peter LaPlante, emailed to ask if I’d like to review a Pwn Plug. The answer to what you, dear readers, know to be a rhetorical question goes without saying.

Here’s the caveat. For toolsmith I only discuss offerings that are free and/or open source. Pwn Plug Community Edition meets that standard, but the Pwnie Express team provided me with a Pwn Plug Elite for testing. As such, for this article, I will discuss only the features freely available in the CE to anyone who owns a Sheevaplug: “Pwn Plug Community Edition does not include the web-based Plug UI, 3G/GSM support, NAC/802.1x bypass.”

For those of you interested in a review of the remaining features exclusive to commercial versions, I’ll post it to my blog on the heels of this column’s publishing.

Dave provided me with a few insights, including the Pwn Plug's most common use cases:

· Remote, low-cost pen testing: penetration test customers save on travel expenses, service providers save on travel time

· Penetration tests with a focus on physical security and social engineering

· Data leakage/exfiltration testing: using a variety of covert channels, the Pwn Plug is able to tunnel through many IDS/IPS solutions and application-aware firewalls undetected

· Information security training: the Pwn Plug touches on many facets of information security (physical, social & employee awareness, data leakage, etc.), thus making it a comprehensive (and fun!) learning tool

One of Pwnie Express’ favorite success stories comes from Jayson Street (The Forbidden Network), who was hired by a large bank to conduct a physical/social penetration test on ten bank branch offices. Armed with a Pwn Plug and a bit of social engineering finesse, Jayson was able to deploy a Pwn Plug in four out of the four branch offices he attempted before the client decided to cut its losses and end the test early. In one instance, a branch manager actually directed Jayson to connect the Pwn Plug underneath his desk. Pwnie Express hopes the Pwn Plug helps illustrate how critical physical security and employee awareness are, and Jayson’s efforts delivered exactly that to his enterprise client.

Adrian Crenshaw (Irongeek) has Jayson’s Derbycon 2011 presentation video posted on his site. It’s well worth your time to watch it.

In addition to the Pwn Plug, there is the Pwn Phone, which is capable of full-scale wireless penetration testing. Penetration testers and service providers often utilize the Pwn Phone for proposal meetings and demonstrations, as the "wow factor" is high. As with Pwn Plug, if you already own or can acquire a Nokia N900, you can download the community edition of Pwn Phone and get after it right away.

Pwn Plug compatibility is currently limited to Sheevaplug devices. There has been little demand so far for the Guruplug/Dreamplug form factors; the Guruplug hardware has a history of overheating, while the Dreamplug is quite bulky and flashy. Bulky and flashy do not equate to good resources for physical & social testing. The development team is working on a trimmed-down version of Pwn Plug for the $25 Pogoplug. Even though it offers only about half the performance and capacity of the Sheeva, with a larger board, it costs only $25.

Figure 1 is a picture of the Pwn Plug I was sent for testing. You can see what we mean by the importance of form factor. It’s barely bigger than a common wall wart, and you can use the included cord or plug it straight into the wall. Pwnie Express included a couple of sticker options for the Sheeva. I chose what looks to be a very typical bar code and manufacturer sticker that even has a PX part number. I chuckle every time I look at it.

Figure 1: Who, me?

With Sheevaplugs typically sporting a 1.2GHz ARM processor, 512MB SDRAM, and 512MB NAND flash, it’s recommended that you don’t treat the device like a workhorse (no Fast-Track, Autopwn, or password cracking), but it’s crazy good for maintaining access in stealth mode, reconnaissance, sniffing, exploitation, and pivoting off to other victim hosts. Figure you’ll find the 512MB storage at about 70% of capacity after installation, but adding SD storage means you can add software within reason. Pwn Plug is Ubuntu underneath, so apt-get is still your friend.

The tool list for a device this small is impressive. Expect to find MSF3, dsniff, fasttrack, kismet, nikto, ptunnel, scapy, and many others at your command, most of which can be called right from the prompt without changing directories.

Installation

To install Pwn Plug CE on a stock Sheevaplug, download the JFFS2 image and follow the instructions. No need to reinvent the wheel here.

Pwning with Pwn Plug

To ensure full understanding for those who may not think in evil mode or conduct penetration testing activity, here’s a quick executive summary followed by the longer play:

Sneak a Pwn Plug into a physical location and plug it in; if properly configured, it phones home, allowing you reverse shell access via a number of possible stealth modes. You can then set up a variety of exploit activities, run scanners, or conduct specific social engineering activity such as the one I am about to demonstrate. The results are collected on the device, and you can retrieve them over the established shell access.

First, imagine the Pwn Plug hidden at the target site, lurking amongst all the other items usually plugged in to a power strip, hiding behind a desk in so innocuous a fashion as to go easily undetected. Figure 2 will send you scurrying about your workplace to ensure there are none in hiding as we speak.

Figure 2: The Pwn Plug looking so innocent

I’ll walk through an extremely fun example with Pwn Plug, but first you’ll need to ensure access. Commercial Pwn Plug users benefit from the Plug UI, but those rolling their own with Pwn Plug CE can still phone home. Have a favorite flavor of reverse shell pwnzorship? Plain old reverse SSH is available, as is shell over DNS, HTTP, ICMP, SSL, or 3G if you have the likes of an O2 E160.

The supporting scripts for reverse shell on the Pwn Plug are found in /var/pwnplug/scripts.

On your SSH receiver (BackTrack 5 recommended), I suggest checking out the PwnieScripts for Pwnie Express from Security Generation. @securitygen even has a method for setting up reverse SSH over Tor. I configured the Pwn Plug for HTTP because who doesn’t allow HTTP traffic outbound? :)

Figure 3: Have shell, will pwn

Access established, time to pwn. One of my all-time favorite collections of mayhem is the Social Engineer Toolkit (SET). You will find SET at /var/pwnplug/set. Change directories appropriately via your established shell and run ./set.

You will be presented with the SET menu. I chose 2. Website Attack Vectors, then 3. Credential Harvester Attack Method, followed by 2. Site Cloner (SET supports both HTTP and HTTPS). In an entirely intentional twist of irony, I submitted http://mail.ccnt.com/igenus/login.php to SET as the URL to clone. Mind you, this is not a hack of the actual site being cloned so much as credential harvesting via an extremely accurate replica, wherein usernames and passwords are posted back to the Pwn Plug.

The test Pwn Plug was set up in the HolisticInfoSec Lab with an IP address of 192.168.248.23. Imagine I’ve sent the victim a link that reads http://mail.ccnt.com/igenus/login.php but actually points to http://192.168.248.23, and enticed them into clicking. Now don’t blink or you’ll miss it; I froze it for you in Figure 4.

Figure 4: SET harvesting from Pwn Plug
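The lure works because the text a victim sees and the address the link actually opens are independent. To make that concrete, and to give defenders something to test mail or web content with, here is a minimal Python sketch (my own, not part of SET) that flags anchors whose visible text is a URL on a different host than the href. It only catches link text that includes a scheme such as http://.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class LinkMismatchFinder(HTMLParser):
    """Flag <a> tags whose visible URL text names a different host than the href."""

    def __init__(self):
        super().__init__()
        self._href = None
        self._text = []
        self.mismatches = []  # (displayed text, real href) pairs

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:  # only collect text inside a link
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            text = "".join(self._text).strip()
            shown = urlparse(text).hostname  # None unless the text is a full URL
            actual = urlparse(self._href).hostname
            if shown and actual and shown != actual:
                self.mismatches.append((text, self._href))
            self._href = None
```

Feeding it the lure described above would report the pair ("http://mail.ccnt.com/igenus/login.php", "http://192.168.248.23").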

All the while, because you have shell access, you can gather results at your discretion. SET has a nice report generator and writes out to XML or HTML.

This is the tip of the iceberg for SET, and a mere fraction of the chaos you can unleash in whisper-quiet mode via Pwn Plug. There are simply too many options to do them justice in so few words, so, as mentioned earlier, I’ll continue the conversation on the HolisticInfoSec blog.

In Conclusion

I had a blast testing Pwn Plug; this is me after spending days doing so.

Ping me via email if you have questions (russ at holisticinfosec dot org).

Cheers…until next month.

Acknowledgements

Saturday, October 15, 2011

Presenting OWASP Top 10 Tools & Tactics at ISSA International

The ISSA International Conference is coming up this week in Baltimore; I'll be presenting OWASP Top 10 Tools and Tactics based on work for the InfoSecInstitute article of the same name.

If you're in Baltimore and planning to attend, stop by Friday, October 21 at 2:20pm in Room 304.

I'll be discussing and demonstrating tools such as Burp Suite, Tamper Data, ZAP, Samurai WTF, Watobo, Watcher, Nikto, and others as well as tactics for their use as part of SDL/SDLC best practices.

If you’ve spent any time defending web applications as a security analyst, or perhaps as a developer seeking to adhere to SDLC practices, you have likely utilized or referenced the OWASP Top 10. Intended first as an awareness mechanism, the Top 10 covers the most critical web application security flaws via consensus reached by a global consortium of application security experts. The OWASP Top 10 promotes managing risk in addition to awareness training, application testing, and remediation. To manage such risk, application security practitioners and developers need an appropriate tool kit. This presentation will explore tooling, tactics, analysis, and mitigation.

Hope to see you there.

Cheers.


Sunday, June 06, 2010

Book Review: ModSecurity Handbook

In January I reviewed Magnus Mischel's ModSecurity 2.5.

While Magnus' work is admirable, I'd be remiss in my duties were I not to review Ivan Ristic's ModSecurity Handbook.

Published as the inaugural offering from Ristic's own Feisty Duck publishing, the ModSecurity Handbook is an important read for ModSecurity fans and new users alike. Need I remind you, Ristic developed ModSecurity, the original web application firewall, in 2002 and remains involved in the project to this day.

This book is a living entity as it is continually updated digitally; your purchase includes 1 year of digital updates. Ristic also wants to know what you think and will incorporate updates and feedback if relevant.

While the ModSecurity Handbook covers v2.5 and beyond, Ristic's is "the only ModSecurity book on the market that provides comprehensive coverage of all features, including those features that are only available in the development repository."

ModSecurity Handbook offers detailed technical guidance and is rules-centric in its approach, covering configuration, rule writing, rule sets, and Lua. Your purchase even includes a digital-only ModSecurity Rule Writing Workshop.

Chapter 10 is dedicated to performance, as proper tuning is essential to succeeding with ModSecurity without degrading web application performance.

That said, the highlight of this excellent read for your reviewer was Chapter 8, covering Persistent Storage.

ModSecurity persistent storage is, for all intents and purposes, a free-form database that helps you:

• Track IP address and session activity, attack, and anomaly scores

• Track user behavior over a long period of time

• Monitor for session issues including hijacking, inactivity timeouts and absolute life span

• Detect denial of service and brute force attacks

• Implement periodic alerting

Following the applied persistence model, I found periodic alerting most interesting and useful. From pg. 126, "Periodic alerting is a technique useful in the cases when it is enough to see one alert about a particular situation, and when further events would only create clutter. You can implement periodic alerting to work once per IP address, session, URL, or even an entire application."

This is the ModSecurity equivalent of a Snort IDS rule header pass action, useful when internal vulnerability scanners might cause an excess of alerts.

ModSecurity rules that perform passive vulnerability scanning might detect traces of vulnerabilities in output, and alert on them. Periodic alerting would thus only alert once when configured accordingly.

As an example, perhaps you know of minor issues that are worth tracking but do not require an alert on every web server hit.

Making use of the GLOBAL collection, ModSecurity Handbook's example handles the scenario above by following a chained rule match with the definition of a variable, thus telling you whether an alert has fired previously. The presence of the variable indicates that an alert shouldn’t fire again for a rule-defined period of time. In concert with expiration and counter resets, this ensures that a rule will warn you only once in your preferred period of time while still logging as you see fit.

Useful, right?
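Here is a Python model of that periodic alerting logic; it is not ModSecurity rule syntax. The dictionary stands in for the variable the book stores in the GLOBAL collection, and the per-key expiry time stands in for its expiration. The key can be an IP address, session, URL, or anything else you choose, mirroring the options quoted above, and every event is still logged.

```python
import time


class PeriodicAlerter:
    """Alert at most once per key per window; every event is still logged."""

    def __init__(self, window_seconds: float, clock=time.monotonic):
        self.window = window_seconds
        self.clock = clock
        self._expires = {}  # key -> time after which a new alert may fire
        self.log = []

    def event(self, key: str) -> bool:
        """Record an event; return True only if it should raise an alert."""
        now = self.clock()
        self.log.append((now, key))  # always log
        expires = self._expires.get(key)
        if expires is not None and now < expires:
            return False  # "variable" still set: suppress the alert
        self._expires[key] = now + self.window  # set the flag with an expiry
        return True
```

With a 60-second window, the first event for an IP alerts, repeats within the minute are only logged, and the first event after expiry alerts again.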

ModSecurity Handbook and Ristic's Apache Security are must-reads for web application security administrators and architects, but the Handbook will not leave those who need step-by-step instructions at a loss.

Trust me when I say, all you need to harden your web presence with ModSecurity is at your fingertips with the ModSecurity Handbook.

Cheers and happy reading.


Please support the Open Security Foundation (OSVDB)

Thursday, June 03, 2010

Web Security Tools²: skipfish and iScanner

June's toolsmith in the ISSA Journal covers skipfish and iScanner.

Skipfish and iScanner, albeit quite different, are both definite additions to your toolkit.

Reduction of web application security flaws as well as the identification and removal of obfuscated malcode are important ongoing processes as part of your proactive and reactive defensive measures.

Skipfish is an “active web application security reconnaissance tool that prepares an interactive sitemap for the targeted site by carrying out a recursive crawl and dictionary-based probes.”

iScanner is a Ruby-based tool that “detects and removes malicious code and webpages malware from your website with automated ease. iScanner will not only show you the infected files from your server but it’s also able to clean these files by removing the malware code from the infected files.”

The article awaits your review here.


Thursday, April 01, 2010

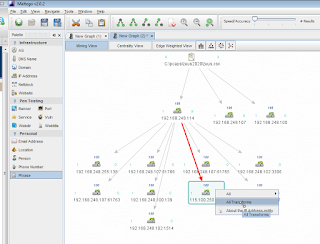

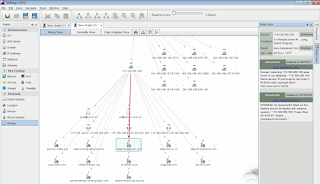

Malware behavior analysis: studying PCAPs with Maltego local transforms

In recent months I've made regular use of Maltego during security data visualization efforts specific to investigations and analysis.

While Maltego includes numerous highly useful entities and transforms, it does not currently feature the ability to directly manipulate native PCAP files.

This is not uncommon among other tools, particularly those specific to visualization, which often consume CSV files.

With thanks to Andrew MacPherson of Paterva for creating these for me upon request for recent presentations, I'm pleased to share with you Maltego local transforms that will render CSVs created from PCAP files. Simple, but extremely useful.

I'll take you step by step through the process, starting with creating CSVs from PCAPs.

For those of you already comfortable with PCAP to CSV conversion and/or using local transforms with Maltego, here are the pyCSV transforms:

GetSourceClients.py

GetDestinationClients.py

All others, read on.

Raffael Marty's AfterGlow (version 1.6 just released) includes tcpdump2csv.pl which uses tcpdump/windump to read a PCAP file and parse it into parametrized CSV output.

Windows users, assuming that Perl is installed and all files and scripts reside in the same directory, execute:

windump -vttttnnelr example.pcap | perl tcpdump2csv.pl "sip dip dport" > example.csv

Linux users:

tcpdump -vttttnnelr example.pcap | tcpdump2csv.pl "sip dip dport" > example.csv
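To see what the two transforms have to work with, here is a minimal Python sketch of the same idea against the CSV: list the distinct source IPs, then the distinct destination IP and port pairs for one source. It is my own reimplementation for illustration, not Andrew MacPherson's transform code, and it assumes three columns in the order requested ("sip dip dport") with no header row.

```python
import csv


def read_flows(csv_path):
    """Yield (sip, dip, dport) tuples from tcpdump2csv.pl output."""
    with open(csv_path, newline="") as fh:
        for row in csv.reader(fh):
            if len(row) >= 3:  # skip blank or short lines
                yield tuple(field.strip() for field in row[:3])


def source_clients(csv_path):
    """Distinct source IPs, roughly what a GetSourceClients-style transform returns."""
    return sorted({sip for sip, _, _ in read_flows(csv_path)})


def destination_clients(csv_path, ip):
    """Distinct (destination IP, port) pairs contacted by one source IP."""
    return sorted({(dip, dport) for sip, dip, dport in read_flows(csv_path) if sip == ip})
```

Running source_clients("example.csv") first, then destination_clients("example.csv", ip) for a suspicious host, mirrors the two-step pivot described below.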

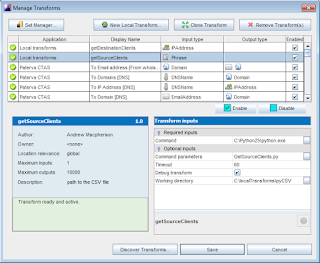

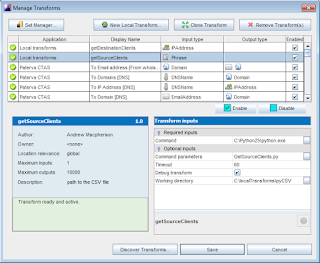

To integrate the pyCSV local transforms with your Maltego instance:

1. Click Tools, then Manage Transforms.

2. Click New Local Transforms.

3. Define the Display name as the name of the local transform. Example: GetSourceClients

4. Each transform must map to an entity. Do so as follows for each transform as you create it:

getSourceClients to Phrase

getDestinationClients to IP Address

5. Click Next.

6. The Command field should point to the Python binary (C:\Python25\python.exe on Windows, /usr/bin/python on Ubuntu 9.10).

7. The Parameters field should refer only to the transform name. Example: GetSourceClients.py

8. Work Directory should be the complete path to the directory where you keep the pyCSV local transform Python scripts (suggest C:\localTransforms\pyCSV for Windows users).

9. Finish, then Save.

You'll also need a copy of MaltegoTransform.py in your local transforms directory (included with the Maltego Python library during installation).
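For the curious, a local transform is simply a program whose stdout Maltego parses as XML, and MaltegoTransform.py builds that XML for you. The sketch below shows roughly what it emits, based on my understanding of the local-transform response format (MaltegoMessage, Entities, Entity Type, Value, Weight), so treat the element names as an assumption and prefer the library in practice. maltego.IPv4Address is the IP Address entity type.

```python
from xml.sax.saxutils import escape, quoteattr


def maltego_response(entity_type, values, weight=100):
    """Build a local-transform response with one entity per value (assumed envelope)."""
    entities = "".join(
        f"<Entity Type={quoteattr(entity_type)}>"
        f"<Value>{escape(str(v))}</Value>"  # escape &, <, > in entity values
        f"<Weight>{int(weight)}</Weight>"
        "</Entity>"
        for v in values
    )
    return (
        "<MaltegoMessage><MaltegoTransformResponseMessage>"
        f"<Entities>{entities}</Entities>"
        "<UIMessages></UIMessages>"
        "</MaltegoTransformResponseMessage></MaltegoMessage>"
    )
```

A transform script would print(maltego_response("maltego.IPv4Address", ips)), where ips comes from the CSV path Maltego hands the script (as sys.argv[1], per my understanding of how local transforms receive the input entity value).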

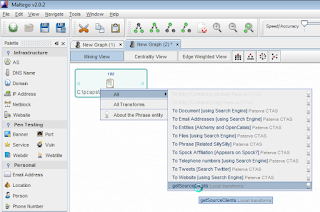

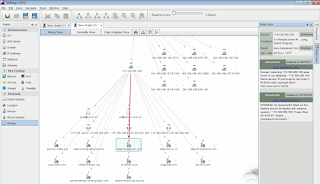

During a recent Zeus bot investigation, I used GetSourceClients.py and GetDestinationClients.py as follows:

1) Converted zeus.pcap, captured during malware analysis in a virtual environment, to zeus.csv: tcpdump -vttttnnelr zeus.pcap | tcpdump2csv.pl "sip dip dport" > zeus.csv

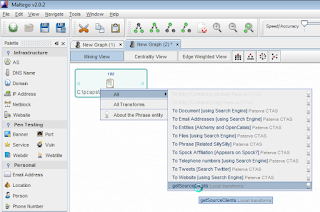

2) Dragged a Phrase entity into the Maltego workspace and, using CopyPath, pasted the full path to zeus.csv into the Phrase entity.

3) Right-clicked the Phrase entity, chose All, then getSourceClients.

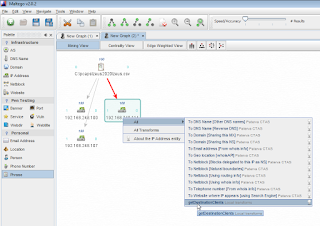

4) Right-clicked the IP entity created for my infected host, chose All, then getDestinationClients.

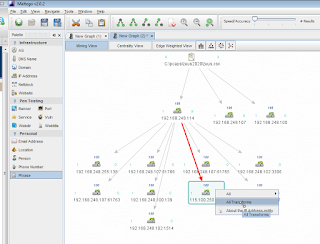

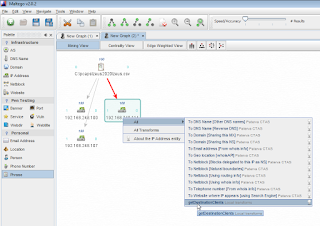

5) Egress traffic to a likely malicious host immediately jumped out of the Maltego workspace at me. I right-clicked (after removing the port reference in the IP entity label) and selected AllTransforms.

6) Maltego's results were swift and validated my immediate assumption. 115.100.250.105 is a malicious Chinese (omg, really?) Zeus C&C server. Nice.
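Step 5's note about removing the port from the IP entity label suggests that getDestinationClients labels each destination as ip:port. Assuming that, the following hypothetical sketch shows the lookup such a transform might perform for a given source IP, plus the label clean-up done by hand before running further transforms (IPv4 only). Function names are illustrative.

```python
import csv
from typing import Iterable


def destinations_for(source_ip: str, lines: Iterable[str]) -> list[str]:
    """Distinct "dip:dport" labels for rows whose source IP is source_ip."""
    labels: dict[str, None] = {}
    for row in csv.reader(lines):
        if len(row) >= 3 and row[0].strip() == source_ip:
            labels.setdefault(f"{row[1].strip()}:{row[2].strip()}", None)
    return list(labels)


def strip_port(label: str) -> str:
    """Drop a trailing ":port" so the label is a bare IPv4 address again."""
    host, sep, port = label.rpartition(":")
    return host if sep and port.isdigit() else label
```

Deduplicating keeps the graph readable: a chatty bot that beacons thousands of times still produces a single edge per destination and port.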

Highlighting a Website entity then choosing Detail View will tell you everything you need to know.

"Size matters not. Look at me. Judge me by my size, do you? Hmm? Hmm. And well you should not. For my ally is Maltego, and a powerful ally it is."

Yoda's right. ;-)

If you have any questions or would like saved transforms, PCAPs, or binary samples, ping me at russ at holisticinfosec dot org.

Cheers.

Please support the Open Security Foundation (OSVDB)

While Maltego includes numerous highly useful entities and transforms, it does not currently feature the ability to directly manipulate native PCAP files.

This is not entirely uncommon amongst other tools, particularly those specific to visualization; often such tools consume CSV files.

With thanks to Andrew MacPherson of Paterva for creating these for me upon request for recent presentations, I'm pleased to share with you Maltego local transforms that will render CSVs created from PCAP files. Simple, but extremely useful.

I'll take you step by step through the process, starting with creating CSVs from PCAPs.

For those of you already comfortable with PCAP to CSV conversion and/or using local transforms with Maltego, here are the pyCSV transforms:

GetSourceClients.py

GetDestinationClients.py

All others, read on.

Sunday, March 21, 2010

Presentations available: RSA, ISACA, and Agora

It's been a busy month of presentations including RSA Conference 2010, ISACA Puget Sound, and the Agora.

The Agora is a "successful strategic association that meets quarterly to bring together the pacific Northwest's top information systems security professionals and technical experts, as well as officers from the private sector, public agencies, local, state and federal government and law enforcement."

At RSA and Agora I discussed tactics intended to compare security data visualization to strictly textual output generated by IDS/IPS. These discussions included details on AfterGlow, Rumint, NetGrok, and Maltego.

At the ISACA Puget Sound chapter meeting I covered securing the company web presence (common security threats to your web presence and what you can do about it). This talk included details specific to the OWASP Top 10 and the CWE/SANS Top 25.

The RSA presentation is here.

The ISACA presentation is here.

The Agora presentation is available upon request (russ at holisticinfosec dot org).

There are PCAPs, scripts, and binary samples discussed in all of these presentations. Should you wish copies of any or all, please contact me.

Cheers.

Monday, March 01, 2010

Financials and the need for software regression testing

SearchFinancialSecurity.com just published my article regarding Financials and the need for software regression testing.

This article cites Ameriprise as an example of a financial services provider who would benefit from improved regression testing and version control.

This article was actually written prior to the recent SQL bug I discussed involving Ameriprise, and is made even more interesting by discussion of a possible small, unrelated Ameriprise data breach in New Hampshire.

I truly hope Ameriprise takes a close look at the suggestions offered and moves towards enhancing security practices on behalf of their consumers.

Cheers.

Tuesday, February 23, 2010

Online finance flaw: Ameriprise III - please make it stop

NOTE: This issue was disclosed responsibly and repaired accordingly.

"Now what?", you're probably saying. Ameriprise again? Yep.

I really wasn't trying this time. Really.

There I was, just sitting in the man cave, happily writing an article on version control and regression testing.

As the Ameriprise cross-site scripting (XSS) vulnerabilities from August 2009 and January 2010 were in scope for the article topic, due diligence required me to go back and make sure the issue hadn't re-resurfaced. ;-)

I accidentally submitted the JavaScript test payload to the wrong parameter.

What do you think happened next?

Nothing good.

I reduced the test string down to a single tick (') to validate the simplicity of the shortcoming; same result.

At the least, this is ridiculous information disclosure, if not leaning heavily towards a SQL injection vulnerability.
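Why does a single tick matter? When user input is concatenated into a SQL string, a lone ' terminates the string literal early, the database throws a syntax error, and verbose debugging output then puts that error (and the query) on the page. The following sketch uses Python's sqlite3 and a hypothetical advisors table purely for illustration; the Ameriprise site ran ColdFusion, where the equivalent control is cfqueryparam. It contrasts concatenation with parameter binding.

```python
import sqlite3


def find_advisors_unsafe(conn: sqlite3.Connection, zip_code: str) -> list:
    # String concatenation: a lone ' closes the literal early and breaks the query.
    return conn.execute(
        "SELECT name FROM advisors WHERE zip = '" + zip_code + "'"
    ).fetchall()


def find_advisors_safe(conn: sqlite3.Connection, zip_code: str) -> list:
    # Parameter binding: the driver treats the input strictly as data.
    return conn.execute(
        "SELECT name FROM advisors WHERE zip = ?", (zip_code,)
    ).fetchall()
```

With the unsafe version, find_advisors_unsafe(conn, "'") raises sqlite3.OperationalError; the safe version simply returns no rows.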

As we learned the last two times we discussed Ameriprise, the only way to report security vulnerabilities is via their PR department, specifically to Benjamin Pratt, VP of Public Communications.

Alrighty then, issue reported and quickly fixed this time (same day)...until some developer rolls back to an old code branch or turns on debugging again.

We all know ColdFusion is insanely verbose, particularly when left in debugging mode, but come now...really?

I really didn't want to know the exact SQL query and trigonometry required to locate an Ameriprise advisor.

Although, after all this, I can comfortably say I won't be seeking an Ameriprise advisor anyway.

Please Mr. Pratt, tell your web application developers to make it stop.

Cheers.

Sunday, September 20, 2009

CSRF attacks and forensic analysis

Cross-site request forgery (CSRF) attacks exhibit an oft misunderstood yet immediate impact on the victim (not to mention the organization they work for) whose browser has just performed actions they did not intend, on behalf of the attacker.

Consider the critical infrastructure operator performing administrative actions via poorly coded web applications, who unknowingly falls victim to a spear phishing attack. The result is a CSRF-borne attack utilized to create an administrative account on the vulnerable platform, granting the attacker complete control over a resource that might manage the likes of a nuclear power plant or a dam (pick your poison).

Enough of an impact statement for you?

There's another impact, generally less considered but no less important, resulting from CSRF attacks: they occur as attributable to the known good user, and in the context of an accepted browser session.

Thus, how is an investigator to fulfill her analytical duties if and when CSRF is deemed to be the likely attack vector?

I maintain two views relevant to this question.

The first is obvious. Vendors and developers should produce web applications that are not susceptible to CSRF attacks. Further, organizations, particularly those managing critical infrastructure and data with high business impact or personally identifiable information (PII), must conduct due diligence to ensure that the products used to provide their services are securely developed.

The second view places the responsibility squarely on the same organization to:

1) capture verbose and detailed web logs (especially the referrer)

2) store and retain browser histories and/or internet proxy logs for administrators who use hardened, monitored workstations, ideally with little or no internet access

Strong, clarifying policies and procedures are recommended to ensure both 1 & 2 are successful efforts.

DETAILED DISCUSSION

Web logs

Following is an attempt to clarify the benefits of verbose logging on web servers as pertinent to CSRF attack analysis, particularly where potentially vulnerable web applications (all?) are served. The example is supported by the correlative browser history. I've anonymized all examples to protect the interests of applications that are still pending repair.

A known good request for a web application administrative function might appear in Apache logs as seen in Figure 1.

Figure 1

As expected, the referrer is http://192.168.248.102/victimApp/?page=admin, meaning the request was made through the administrative page the application itself provides.

However, if an administrator has fallen victim to a spear phishing attempt intended to perform the same function via a CSRF attack, the log entry might appear as seen in Figure 2.

Figure 2

In Figure 2, although the source IP is the same as the known good request seen in Figure 1, it's clear that the request originated from an unexpected location, specifically http://badguy.com/poc/postCSRFvictimApp.html as seen in the referrer field.

Most attackers won't be so accommodating as to name their attack script something like postCSRFvictimApp.html, but the GET/POST should still stand out via the referrer field.
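The referrer comparison between Figures 1 and 2 can be automated. The following is a minimal Python sketch, assuming Apache's combined log format (as recommended later in this post) and treating 192.168.248.102 as the only trusted referrer host for /victimApp/ requests; the function name and parameters are hypothetical. It also flags empty referrers, with the caveat that some browsers and proxies strip the header, so expect false positives.

```python
import re
from typing import Iterable, Iterator
from urllib.parse import urlsplit

# Apache "combined" LogFormat:
#   %h %l %u %t \"%r\" %>s %b \"%{Referer}i\" \"%{User-agent}i\"
# Simplified: does not handle escaped quotes (\") inside fields.
COMBINED = re.compile(
    r'(?P<host>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] "(?P<request>[^"]*)" '
    r'(?P<status>\d{3}) (?P<size>\S+) "(?P<referer>[^"]*)" "(?P<agent>[^"]*)"'
)


def suspicious_referrers(lines: Iterable[str], trusted_hosts: set[str],
                         path_prefix: str) -> Iterator[dict[str, str]]:
    """Yield parsed entries for requests under path_prefix whose Referer
    host is missing or not in trusted_hosts."""
    for line in lines:
        m = COMBINED.match(line)
        if m is None:
            continue  # not a combined-format line
        parts = m["request"].split()  # e.g. ["POST", "/victimApp/...", "HTTP/1.1"]
        if len(parts) < 2 or not parts[1].startswith(path_prefix):
            continue
        referer = m["referer"]
        ref_host = urlsplit(referer).hostname if referer != "-" else None
        if ref_host not in trusted_hosts:
            yield m.groupdict()
```

Usage would look like: with open("access_log") as fh, iterate over suspicious_referrers(fh, {"192.168.248.102"}, "/victimApp/") and print each hit's time and referer.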

Browser history or proxy logs

Assuming time stamp matching and enforced browser history retention or proxy logging (major assumptions, I know), the log entries above can also be correlated. Consider the Firefox history summary seen in Figure 3.

Figure 3

The sequence of events shows the browser making a request to badguy.com, followed by the addition of a new user via the vulnerable web application's add-user administrative function.
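To pull the browser side of this correlation, one option is to query a copy of the Firefox profile's places.sqlite directly. This sketch assumes the Firefox 3 schema as I understand it (moz_places.url, moz_historyvisits.place_id, and visit_date stored as microseconds since the Unix epoch, UTC); verify the schema for your browser version, and always work on a copy rather than the live profile. Function names are hypothetical.

```python
import sqlite3
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)

# Assumed Firefox 3-era schema: visit_date is microseconds since EPOCH.
HISTORY_SQL = """
    SELECT v.visit_date, p.url
    FROM moz_historyvisits AS v
    JOIN moz_places AS p ON p.id = v.place_id
    WHERE v.visit_date BETWEEN ? AND ?
    ORDER BY v.visit_date
"""


def to_prtime(dt: datetime) -> int:
    """Convert a timezone-aware datetime to integer microseconds since EPOCH."""
    return (dt - EPOCH) // timedelta(microseconds=1)


def visits_between(conn: sqlite3.Connection, start: datetime,
                   end: datetime) -> list[tuple[datetime, str]]:
    """(UTC time, URL) for every history visit in [start, end], oldest first."""
    rows = conn.execute(HISTORY_SQL, (to_prtime(start), to_prtime(end)))
    return [(EPOCH + timedelta(microseconds=us), url) for us, url in rows]
```

Given the timestamp of a suspicious web log entry, a window of a minute or two on either side is usually enough to see whether an external page was loaded just before the administrative request.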

RECOMMENDATIONS

1) Enable the appropriate logging levels and format, and ensure that the referrer field is always captured.

For Apache servers consider the following log format:

LogFormat "%h %l %u %t \"%r\" %>s %b \"%{Referer}i\" \"%{User-agent}i\"" combined

CustomLog log/access_log combined

For IIS servers be sure to enable cs(Referer) logging via IIS Manager.

Please note that it is not enabled by default in IIS and that W3C Extended Log File Format is required.

2) Retain and monitor browser histories and/or internet proxy logs for administrators who conduct high impact administrative duties via web applications. Ideally, said administrators should use hardened, monitored workstations, with little or no internet access.

3) Provide enforced policies and procedures to ensure that 1 & 2 are undertaken successfully.
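For the IIS side of recommendation 1, the W3C Extended Log File Format names its columns in a #Fields directive, so a parser should key on that header rather than on fixed column positions. The following is a minimal sketch with hypothetical helper names: one function confirms cs(Referer) is actually being logged, the other yields entries as dictionaries. It assumes space-delimited entries with embedded spaces already encoded (IIS writes them as +).

```python
from typing import Iterable, Iterator


def referer_logged(lines: Iterable[str]) -> bool:
    """True if any #Fields directive in the log declares the cs(Referer) column."""
    return any(
        line.startswith("#Fields:") and "cs(Referer)" in line.split()
        for line in lines
    )


def w3c_records(lines: Iterable[str]) -> Iterator[dict[str, str]]:
    """Yield one dict per log entry, keyed by the most recent #Fields names."""
    fields: list[str] = []
    for line in lines:
        line = line.rstrip("\r\n")
        if line.startswith("#Fields:"):
            fields = line[len("#Fields:"):].split()
        elif line and not line.startswith("#") and fields:
            yield dict(zip(fields, line.split(" ")))
```

Re-reading #Fields each time it appears matters because IIS writes a fresh header whenever the logging configuration changes or the service restarts.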

Feedback welcome, as always, via comments or email.

Cheers.

Thursday, August 20, 2009

Amex II: Ameriprise mishandles disclosure too

Yet another online finance flaw for your consideration.

Remember the American Express issue?

Apparently the negligence and ignorance of the parent has been inherited by the child.

It took me pinging Dan Goodin at The Register and asking him to shake Ameriprise out of their slumber to address the most commonplace, simple, web application bug of all: XSS. Really? Still?

Dan did a bang-up job of the task at hand; it was fixed within hours. Ameriprise had ignored my multiple attempts to disclose over five months. Power of the press, eh?

The story is here.

I also owe Laura Wilson at Information Security Resources for alerting me to likely issues with Ameriprise.

I'm tired of having to say it. It's even gotten to the place where readers get pissed at me because I keep stressing the point. But I shouldn't have to.

Major financial providers should not be ignoring reports of common web application vulnerabilities sent in via all their available channels.

Major financial providers should be reviewing their web sites and their code at regular intervals, proactively preventing these issues.

Blah, blah, blah...you can't hack a server with XSS.

If you attended BlackHat or Defcon a few weeks ago, you may realize how much less relevant that argument is.

Check out the XAB, Firefox extensions, and evasion discussions.

You can be pwned through XSS.

Do I need to stress compliance again? Amex touts itself as a founding PCI partner, yet here we go again.

Vendors and developers need to get smarter, faster, and more responsive to security related notifications, particularly with regard to their websites.

To that end, keep an eye on the Data Security Podcast. Ira Victor and I have hatched a scheme to promote the use of proper disclosure handling by website operators such as major financial services providers. He'll also be posting podcasted discussions we've had regarding the disclosure issues, as well as the forensic challenges presented by CSRF attacks (another easily avoided, common web application vulnerability).

I'll also be talking about a pending ISO standard for disclosure that I hope will begin to drive enterprise adoption of improved disclosure handling.

Friday, August 14, 2009

Linux Magazine: Tools for Visualizing IDS Output

The September 2009 issue (106) of Linux Magazine features a cover story I've written that I freely admit I'm very proud of. Tools for Visualizing IDS Output is an extensive, comparative study of malicious PCAPs as interpreted by the Snort IDS output versus the same PCAPs rendered by a variety of security data visualization tools. The Snort rules utilized are, of course, the quintessential ET rules from Matt Jonkman's EmergingThreats.net. This article exemplifies the power and beauty of two disciplines I've long favored: network security monitoring and security data visualization.

Excerpt:

The flood of raw data generated by intrusion detection systems (IDS) is often overwhelming for security specialists, and telltale signs of intrusion are sometimes overlooked in all the noise. Security visualization tools provide an easy, intuitive means for sorting through the dizzying data and spotting patterns that might indicate intrusion. Certain analysis and detection tools use PCAP, the Packet Capture library, to capture traffic. Several PCAP-enabled applications are capable of saving the data collected during a listening session into a PCAP file, which is then read and analyzed with other tools. PCAP files offer a convenient means for preserving and replaying intrusion data. In this article, I'll use PCAPs to explore a few popular free visualization tools. For each scenario, I’ll show you how the attack looks to the Snort intrusion detection system, then I’ll describe how the same incident would appear through a security visualization application.

The article gives DAVIX its rightful due, but also covers a tool to be included in the next DAVIX release called NetGrok. If you're not familiar with NetGrok, visit the site, download the tool and prepare to be amazed.

I'll be presenting this work and research at the Seattle Secureworld Expo on October 28th at 3pm. If you're in the area, hope to see you there.

This issue of Linux Magazine is on newsstands now; grab a copy while you can. It includes Ubuntu and Kubuntu 9.04 on DVD, so it's well worth the investment.

Grab NetGrok at your earliest convenience and let me know what you think.

Cheers.

Thursday, August 06, 2009

AppRiver: SaaS security provider sets standard for rapid response

On July 28th I was happily catching up on my RSS feeds before getting ready to head off to Las Vegas for DEFCON when a Dark Reading headline caught my eye.

Tim Wilson's piece, After Years Of Struggle, SaaS Security Market Finally Catches Fire, drew me in for two reasons.

I'm a fan of certain SaaS Security products (SecureWorks), but I also like to pick on SaaS/cloud offerings for not shoring up their security as much as they should.

The second page of Tim's article described AppRiver, the "Messaging Experts", as one of several smaller service providers that have created a dizzying array of offerings to choose from.

That was more than enough impetus to go sniffing about, and sure enough, your basic, run-of-the-mill XSS vulnerabilities popped up almost immediately.

Before...

After...

Not likely an issue a SaaS security provider wants to leave unresolved, and here's where the story brightens up in an extraordinarily refreshing way.

If I tried, in my wildest imagination, I couldn't realize a better disclosure response than what follows as conducted by AppRiver AND SmarterTools.

Simply stunning.

Let me provide the exact timeline for you:

1) July 28, 9:49pm: Received automated response from support at appriver.com after disclosing vulnerability via their online form.

2) July 28, 9:55pm: Received a human response from support team lead Nicky F. seeking more information "so we can look into this".

(SIX MINUTES AFTER MY DISCLOSURE)

3) July 28, 10:27pm: Received a phone call from Scott at AppRiver to make sure they clearly understand the issue for proper escalation.

(NOW SHAKING MY HEAD IN AMAZEMENT)

4) July 29, 6:35am: Received an email from Scottie, an AppRiver server engineer, seeking yet more details.

5) July 29, 8:51 & 8:59am: Received a voicemail and email from Scottie to let me know that one of the vulnerabilities I'd discovered was part of 3rd party (SmarterTools) code AppRiver was using to track support requests.

(MORE ON THIS IN A BIT)

6) July 29, 2:08pm: Received email from Steve M., AppRiver software architect, who stated that:

a) "We deployed anti-XSS code today as a fix and are using scanning tools and tests to analyze our other web applications to ensure nothing else has slipped through the cracks. We do employ secure coding practices in our development department and take these matters seriously. We appreciate your help and are going to use this as an opportunity to focus our development teams on the necessity and best practices of secure coding."

b) "Regarding XSS vulnerabilities you detected in the SmarterTrack application (the above mentioned 3rd party tracking app) from SmarterTools, one of our lead Engineers and myself called them this morning explaining the vulnerability and requesting an update to fix the problem. We also relayed to them that a security professional had discovered the vulnerability and would be contacting them to discuss it further."

(I AM NOW SPEECHLESS WATCHING APPRIVER HANDLE THIS DISCLOSURE)

NOTE: Less than 24 hours after my initial report, the vulnerabilities that AppRiver had direct ownership of were repaired.

7) July 29, 4:17pm: Received an email from Andrew W at SmarterTools (3rd party tracking app vendor) who stated "thank you for pointing this out to us... we will be releasing a build within the next week to resolve these issues."

(CLEARLY STATED INTENTIONS)

8) August 4, 8:02am: Received another email from Andrew W at SmarterTools who stated "we plan to release our next build tomrrow morning. (Wednesday GMT + 7) I will let you know as soon as it becomes available for download on our site."

(CLARIFYING EXACTLY WHAT THEY SAID THEY WERE GOING TO DO)

9) August 5, 9:37am: Received another email from Andrew W at SmarterTools stating that "a new version of SmarterTrack is now available via our website. (v 4.0.3504) This version includes a fix to the security issues you reported."

(DID EXACTLY WHAT THEY SAID THEY WERE GOING TO DO)

10) The resulting SmarterTools SmarterTrack vulnerability advisory was released yesterday on my Research pages: HIO-2009-0728

I must reiterate.

This is quite simply the new bar for response to vulnerability disclosures.

It is all the more remarkable that not one, but two vendors followed such a process.

I am not a customer of either of these vendors but can clearly state this: if I required services offered by AppRiver and SmarterTools, I would sign up without hesitation.

AppRiver and SmarterTools, yours is the standard to be met by others. Should other vendors utilize even a modicum of your response and engagement process, the Internet at large would be a safer place.

Well done to you both.


Please support the Open Security Foundation (OSVDB)


Wednesday, August 05, 2009

toolsmith: AIRT-Application for Incident Response Teams

My monthly toolsmith column in the August 2009 edition of the ISSA Journal features AIRT.

"AIRT is a web-based application that has been designed and developed to support the day to day operations of a computer security incident response team. The application supports highly automated processing of incident reports and facilitates coordination of multiple incidents by a security operations center."

Kees Leune had pointed me to his excellent offering after I'd sent him MIR-ROR for his consideration.

Incident response teams will find this app very useful for case management.

The article PDF is here.

Thanks to Kees for all his time and feedback while I was writing this month's article.

Cheers.


Sunday, August 02, 2009

DEFCON 17 Presentation and Videos Now Available

Mike and I presented CSRF: Yeah, It Still Works to a receptive DEFCON crowd, where we took specific platforms and vendors to task for failing to secure their offerings against cross-site request forgery (CSRF) attacks.

Dan Goodin from The Register did a nice write-up on the talk in which he cleverly referred to some of the aforementioned platforms and vendors as the Unholy Trinity. ;-) See if you can spot in the presentation slides why that reference is pretty funny.

For those of you who are interested in the talk but weren't able to attend, the presentation slides are here, and links to the associated videos are embedded in the appropriate slides. The videos are large AVI files, so you'll be much happier downloading them than trying to stream them.

I'll be following up on some very interesting questions that arose during Q&A after this talk, so stay tuned over the next few weeks for posts regarding sound token implementation, CSRF mitigation and Ruby, and the implications of CSRF attacks on forensic investigations.
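Until those follow-up posts arrive, here is a hedged illustration of one common pattern for a sound anti-CSRF token; it is not necessarily the approach discussed in the talk. This minimal Python sketch binds a random nonce to the user's session ID with an HMAC, so a token minted for one session is rejected for any other. The names `SECRET_KEY`, `issue_csrf_token`, and `verify_csrf_token` are hypothetical, and a real deployment would also need proper key management, token expiry, and a framework hook that checks the token on every state-changing request.

```python
import hashlib
import hmac
import secrets

# Hypothetical server-side secret; in practice, load it from secure configuration.
SECRET_KEY = secrets.token_bytes(32)


def issue_csrf_token(session_id: str, key: bytes = SECRET_KEY) -> str:
    """Return 'nonce:mac', where the HMAC ties the random nonce to this session."""
    nonce = secrets.token_hex(16)
    mac = hmac.new(key, f"{session_id}:{nonce}".encode(), hashlib.sha256).hexdigest()
    return f"{nonce}:{mac}"


def verify_csrf_token(session_id: str, token: str, key: bytes = SECRET_KEY) -> bool:
    """Reject tokens that are malformed, forged, or minted for a different session."""
    try:
        nonce, mac = token.split(":", 1)
    except ValueError:
        # No separator present: the token is malformed.
        return False
    expected = hmac.new(key, f"{session_id}:{nonce}".encode(), hashlib.sha256).hexdigest()
    # Constant-time comparison on bytes avoids leaking how much of the MAC matched.
    return hmac.compare_digest(mac.encode(), expected.encode())


if __name__ == "__main__":
    token = issue_csrf_token("session-abc")
    print(verify_csrf_token("session-abc", token))  # True
    print(verify_csrf_token("session-xyz", token))  # False
```

In this sketch a form would carry the token in a hidden field, and the server would call `verify_csrf_token` before acting on the POST. A forged cross-site request fails because the attacker's page cannot read the victim's token.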

Cheers.


Moving blog to HolisticInfoSec.io

toolsmith and HolisticInfoSec have moved. I've decided to consolidate all content on one platform, namely an R markdown blogdown sit...
